In the traditional view of the foundations of mathematics one imagines that axioms—say stated in terms of symbolic expressions—are in some sense the lowest level of mathematics. But thinking in terms of the ruliad suggests that in fact there is a still-lower “ur level”—a kind of analog of machine code in which everything, including axioms, is broken down into ultimate “raw computation”.

Take an axiom like x∘y=(y∘x)∘y, or, in more precise computational language:

Compared to everything we’re used to seeing in mathematics this looks simple. But actually it’s already got a lot in it. For example, it assumes the notion of a binary operator, which it’s in effect naming “∘”. And for example it also assumes the notion of variables, and has two distinct pattern variables that are in effect “tagged” with the names x and y.

So how can we define what this axiom ultimately “means”? Somehow we have to go from its essentially textual symbolic representation to a piece of actual computation. And, yes, the particular representation we’ve used here can immediately be interpreted as computation in the Wolfram Language. But the ultimate computational concept we’re dealing with is more general than that. And in particular it can exist in any universal computational system.

Different universal computational systems (say particular languages or CPUs or Turing machines) may have different ways to represent computations. But ultimately any computation can be represented in any of them—with the differences in representation being like different “coordinatizations of computation”.

And however we represent computations there is one thing we can say for sure: all possible computations are somewhere in the ruliad. Different representations of computations correspond in effect to different coordinatizations of the ruliad. But all computations are ultimately there.

For our Physics Project it’s been convenient to use a “parametrization of computation” that can be thought of as being based on rewriting of hypergraphs. The elements in these hypergraphs are ultimately purely abstract, but we tend to talk about them as “atoms of space” to indicate the beginnings of our interpretation.

It’s perfectly possible to use hypergraph rewriting as the “substrate” for representing axiom systems stated in terms of symbolic expressions. But it’s a bit more convenient (though ultimately equivalent) to instead use systems based on expression rewriting—or in effect tree rewriting.

At the outset, one might imagine that different axiom systems would somehow have to be represented by “different rules” in the ruliad. But as one might expect from the phenomenon of universal computation, it’s actually perfectly possible to think of different axiom systems as just being specified by different “data” operated on by a single set of rules. There are many rules and structures that we could use. But one set that has the benefit of a century of history are S, K combinators.

The basic concept is to represent everything in terms of “combinator expressions” containing just the two objects S and K. (It’s also possible to have just one fundamental object, and indeed S alone may be enough.)

It’s worth saying at the outset that when we go this “far down” things get pretty non-human and obscure. Setting things up in terms of axioms may already seem pedantic and low level. But going to a substrate below axioms—that we can think of as getting us to raw “atoms of existence”—will lead us to a whole other level of obscurity and complexity. But if we’re going to understand how mathematics can emerge from the ruliad this is where we have to go. And combinators provide us with a more-or-less-concrete example.

Here’s an example of a small combinator expression

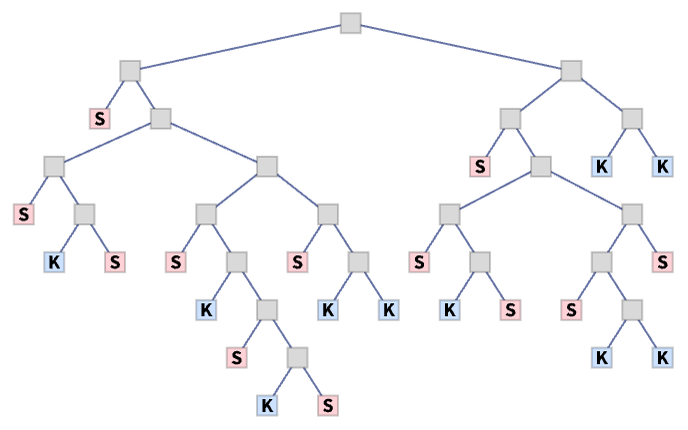

which corresponds to the “expression tree”:

We can write the combinator expression without explicit “function application” [...] by using a (left) application operator

and it’s always unambiguous to omit this operator, yielding the compact representation:

By mapping S, K and the application operator to codewords it’s possible to represent this as a simple binary sequence:

But what does our combinator expression mean? The basic combinators are defined to have the rules:

These rules on their own don’t do anything to our combinator expression. But if we form the expression

which we can write as

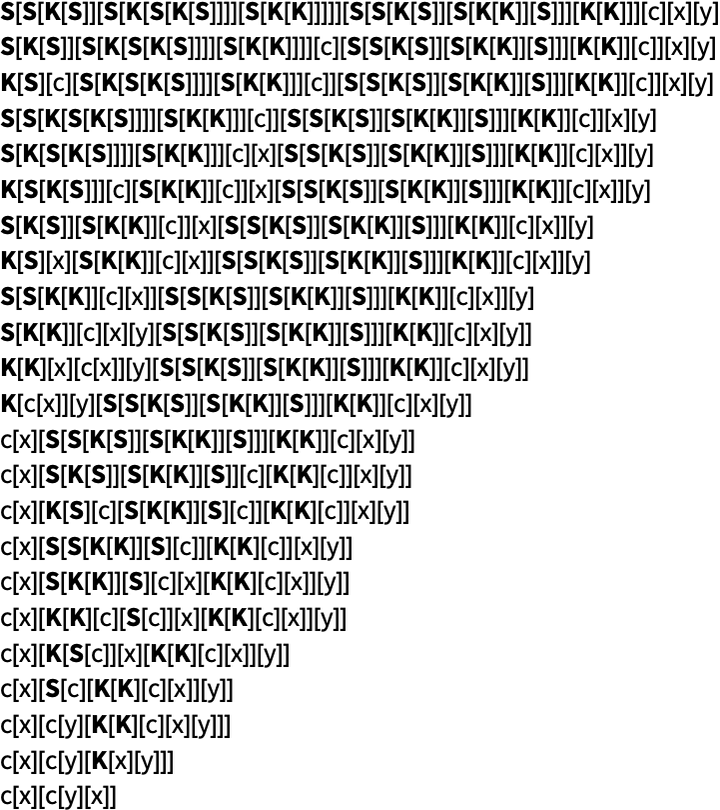

then repeated application of the rules gives:

We can think of this as “feeding” c, x and y into our combinator expression, then using the “plumbing” defined by the combinator expression to assemble a particular expression in terms of c, x and y.

But what does this expression now mean? Well, that depends on what we think c, x and y mean. We might notice that c always appears in the configuration c[_][_]. And this means we can interpret it as a binary operator, which we could write in infix form as ∘ so that our expression becomes:

And, yes, this is all incredibly low level. But we need to go even further. Right now we’re feeding in names like c, x and y. But in the end we want to represent absolutely everything purely in terms of S and K. So we need to get rid of the “human-readable names” and just replace them with “lumps” of S, K combinators that—like the names—get “carried around” when the combinator rules are applied.

We can think about our ultimate expressions in terms of S and K as being like machine code. “One level up” we have assembly language, with the same basic operations, but explicit names. And the idea is that things like axioms—and the laws of inference that apply to them—can be “compiled down” to this assembly language.

But ultimately we can always go further, to the very lowest-level “machine code”, in which only S and K ever appear. Within the ruliad as “coordinatized” by S, K combinators, there’s an infinite collection of possible combinator expressions. But how do we find ones that “represent something recognizably mathematical”?

As an example let’s consider a possible way in which S, K can represent integers, and arithmetic on integers. The basic idea is that an integer n can be input as the combinator expression

which for n=5 gives:

But if we now apply this to [S][K] what we get reduces to

which contains 4 S’s.

But with this representation of integers it’s possible to find combinator expressions that represent arithmetic operations. For example, here’s a representation of an addition operator:

At the “assembly language” level we might call this plus, and apply it to integers i and j using:

But at the “pure machine code” level 1+2 can be represented simply by

which when applied to [S][K] reduces to the “output representation” of 3:

As a slightly more elaborate example

represents the operation of raising to a power. Then 23 becomes:

Applying this to [S][K] repeated application of the combinator rules gives

eventually yielding the output representation of 8:

We could go on and construct any other arithmetic or computational operation we want, all just in terms of the “universal combinators” S and K.

But how should we think about this in terms of our conception of mathematics? Basically what we’re seeing is that in the “raw machine code” of S, K combinators it’s possible to “find” a representation for something we consider to be a piece of mathematics.

Earlier we talked about starting from structures like axiom systems and then “compiling them down” to raw machine code. But what about just “finding mathematics” in a sense “naturally occurring” in “raw machine code”? We can think of the ruliad as containing “all possible machine code”. And somewhere in that machine code must be all the conceivable “structures of mathematics”. But the question is: in the wildness of the raw ruliad, what structures can we as mathematical observers successfully pick out?

The situation is quite directly analogous to what happens at multiple levels in physics. Consider for example a fluid full of molecules bouncing around. As we’ve discussed several times, observers like us usually aren’t sensitive to the detailed dynamics of the molecules. But we can still successfully pick out large-scale structures—like overall fluid motions, vortices, etc. And—much like in mathematics—we can talk about physics just at this higher level.

In our Physics Project all this becomes much more extreme. For example, we imagine that space and everything in it is just a giant network of atoms of space. And now within this network we imagine that there are “repeated patterns”—that correspond to things like electrons and quarks and black holes.

In a sense it is the big achievement of natural science to have managed to find these regularities so that we can describe things in terms of them, without always having to go down to the level of atoms of space. But the fact that these are the kinds of regularities we have found is also a statement about us as physical observers.

And the point is that even at the level of the raw ruliad our characteristics as physical observers will inevitably lead us to such regularities. The fact that we are computationally bounded and assume ourselves to have a certain persistence will lead us to consider things that are localized and persistent—that in physics we identify for example as particles.

And it’s very much the same thing in mathematics. As mathematical observers we’re interested in picking out from the raw ruliad “repeated patterns” that are somehow robust. But now instead of identifying them as particles, we’ll identify them as mathematical constructs and definitions. In other words, just as a repeated pattern in the ruliad might in physics be interpreted as an electron, in mathematics a repeated pattern in the ruliad might be interpreted as an integer.

We might think of physics as something “emergent” from the structure of the ruliad, and now we’re thinking of mathematics the same way. And of course not only is the “underlying stuff” of the ruliad the same in both cases, but also in both cases it’s “observers like us” that are sampling and perceiving things.

There are lots of analogies to the process we’re describing of “fishing constructs out of the raw ruliad”. As one example, consider the evolution of a (“class 4”) cellular automaton in which localized structures emerge:

Underneath, just as throughout the ruliad, there’s lots of detailed computation going on, with rules repeatedly getting applied to each cell. But out of all this underlying computation we can identify a certain set of persistent structures—which we can use to make a “higher-level description” that may capture the aspects of the behavior that we care about.

Given an “ocean” of S, K combinator expressions, how might we set about “finding mathematics” in them? One straightforward approach is just to identify certain “mathematical properties” we want, and then go searching for S, K combinator expressions that satisfy these.

For example, if we want to “search for (propositional) logic” we first need to pick combinator expressions to symbolically represent “true” and “false”. There are many pairs of expressions that will work. As one example, let’s pick:

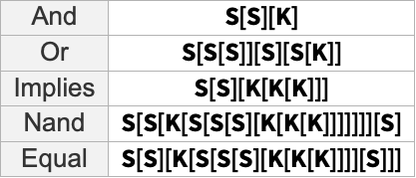

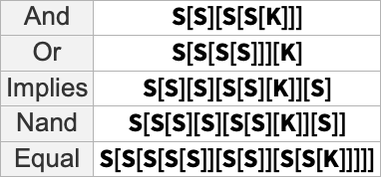

Now we can just search for combinator expressions which, when applied to all possible pairs of “true” and “false” give truth tables corresponding to particular logical functions. And if we do this, here are examples of the smallest combinator expressions we find:

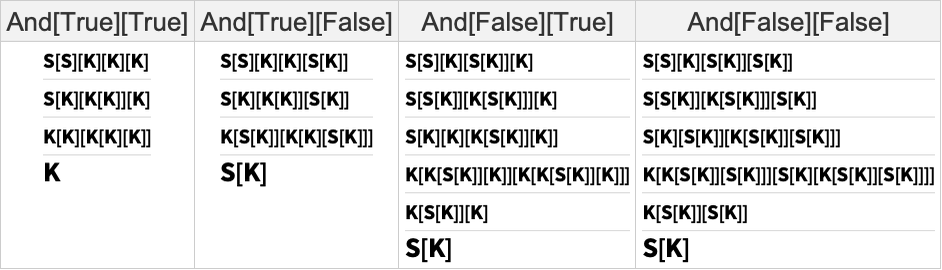

Here’s how we can then reproduce the truth table for And:

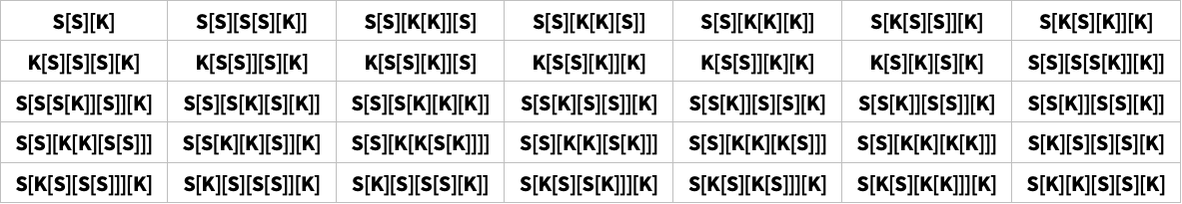

If we just started picking combinator expressions at random, then most of them wouldn’t be “interpretable” in terms of this representation of logic. But if we ran across for example

we could recognize in it the combinators for And, Or, etc. that we identified above, and in effect “disassemble” it to give:

It’s worth noting, though, that even with the choices we made above for “true” and “false”, there’s not just a single possible combinator, say for And. Here are a few possibilities:

And there’s also nothing unique about the choices for “true” and “false”. With the alternative choices

here are the smallest combinator expressions for a few logical functions:

So what can we say in general about the “interpretability” of an arbitrary combinator expression? Obviously any combinator expression does what it does at the level of raw combinators. But the question is whether it can be given a “higher-level”—and potentially “mathematical”—interpretation.

And in a sense this is directly an issue of what a mathematical observer “perceives” in it. Does it contain some kind of robust structure—say a kind of analog for mathematics of a particle in physics?

Axiom systems can be viewed as a particular way to “summarize” certain “raw machine code” in the ruliad. But from the point of a “raw coordinatization of the ruliad” like combinators there doesn’t seem to be anything immediately special about them. At least for us humans, however, they do seem to be an obvious “waypoint”. Because by distinguishing operators and variables, establishing arities for operators and introducing names for things, they reflect the kind of structure that’s familiar from human language.

But now that we think of the ruliad as what’s “underneath” both mathematics and physics there’s a different path that’s suggested. With the axiomatic approach we’re effectively trying to leverage human language as a way of summarizing what’s going on. But an alternative is to leverage our direct experience of the physical world, and our perception and intuition about things like space. And as we’ll discuss later, this is likely in many ways a better “metamodel” of the way pure mathematics is actually practiced by us humans.

In some sense, this goes straight from the “raw machine code” of the ruliad to “human-level mathematics”, sidestepping the axiomatic level. But given how much “reductionist” work has already been done in mathematics to represent its results in axiomatic form, there is definitely still great value in seeing how the whole axiomatic setup can be “fished out” of the “raw ruliad”.

And there’s certainly no lack of complicated technical issues in doing this. As one example, how should one deal with “generated variables”? If one “coordinatizes” the ruliad in terms of something like hypergraph rewriting this is fairly straightforward: it just involves creating new elements or hypergraph nodes (which in physics would be interpreted as atoms of space). But for something like S, K combinators it’s a bit more subtle. In the examples we’ve given above, we have combinators that, when “run”, eventually reach a fixed point. But to deal with generated variables we probably also need combinators that never reach fixed points, making it considerably more complicated to identify correspondences with definite symbolic expressions.

Another issue involves rules of entailment, or, in effect, the metalogic of an axiom system. In the full axiomatic setup we want to do things like create token-event graphs, where each event corresponds to an entailment. But what rule of entailment should be used? The underlying rules for S, K combinators, for example, define a particular choice—though they can be used to emulate others. But the ruliad in a sense contains all choices. And, once again, it’s up to the observer to “fish out” of the raw ruliad a particular “slice”—which captures not only the axiom system but also the rules of entailment used.

It may be worth mentioning a slightly different existing “reductionist” approach to mathematics: the idea of describing things in terms of types. A type is in effect an equivalence class that characterizes, say, all integers, or all functions from tuples of reals to truth values. But in our terms we can interpret a type as a kind of “template” for our underlying “machine code”: we can say that some piece of machine code represents something of a particular type if the machine code matches a particular pattern of some kind. And the issue is then whether that pattern is somehow robust “like a particle” in the raw ruliad.

An important part of what made our Physics Project possible is the idea of going “underneath” space and time and other traditional concepts of physics. And in a sense what we’re doing here is something very similar, though for mathematics. We want to go “underneath” concepts like functions and variables, and even the very idea of symbolic expressions. In our Physics Project a convenient “parametrization” of what’s “underneath” is a hypergraph made up of elements that we often refer to as “atoms of space”. In mathematics we’ve discussed using combinators as our “parametrization” of what’s “underneath”.

But what are these “made of”? We can think of them as corresponding to raw elements of metamathematics, or raw elements of computation. But in the end, they’re “made of” whatever the ruliad is “made of”. And perhaps the best description of the elements of the ruliad is that they are “atoms of existence”—the smallest units of anything, from which everything, in mathematics and physics and elsewhere, must be made.

The atoms of existence aren’t bits or points or anything like that. They’re something fundamentally lower level that’s come into focus only with our Physics Project, and particularly with the identification of the ruliad. And for our purposes here I’ll call such atoms of existence “emes” (pronounced “eemes”, like phonemes etc.).

Everything in the ruliad is made of emes. The atoms of space in our Physics Project are emes. The nodes in our combinator trees are emes. An eme is a deeply abstract thing. And in a sense all it has is an identity. Every eme is distinct. We could give it a name if we wanted to, but it doesn’t intrinsically have one. And in the end the structure of everything is built up simply from relations between emes.