We can think of “ordinary expressions” like a∘b as being like “data”, and rules as being like “code”. But when everything is a symbolic expression, it’s perfectly possible—as we saw above—to “treat code like data”, and in particular to generate rules as output. But this now raises a new possibility. When we “get a rule as output”, why not start “using it like code” and applying it to things?

In mathematics we might apply some theorem to prove a lemma, and then we might subsequently use that lemma to prove another theorem—eventually building up a whole “accumulative structure” of lemmas (or theorems) being used to prove other lemmas. In any given proof we can in principle always just keep using the axioms over and over again—but it’ll be much more efficient to progressively build a library of more and more lemmas, and use these. And in general we’ll build up a richer structure by “accumulating lemmas” than always just going back to the axioms.

In the multiway graphs we’ve drawn so far, each edge represents the application of a rule, but that rule is always a fixed axiom. To represent accumulative evolution we need a slightly more elaborate structure—and it’ll be convenient to use token-event graphs rather than pure multiway graphs.

Every time we apply a rule we can think of this as an event. And with the setup we’re describing, that event can be thought of as taking two tokens as input: one the “code rule” and the other the “data rule”. The output from the event is then some collection of rules, which can then serve as input (either “code” or “data”) to other events.

Let’s start with the very simple example of the rule

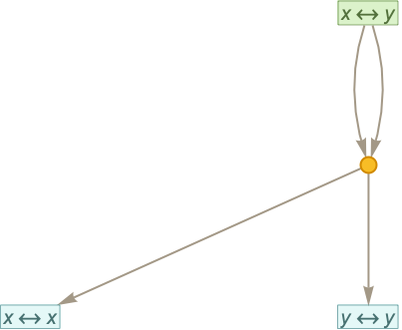

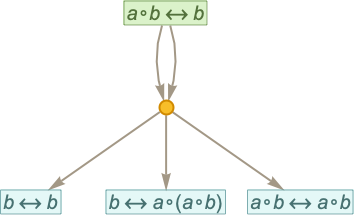

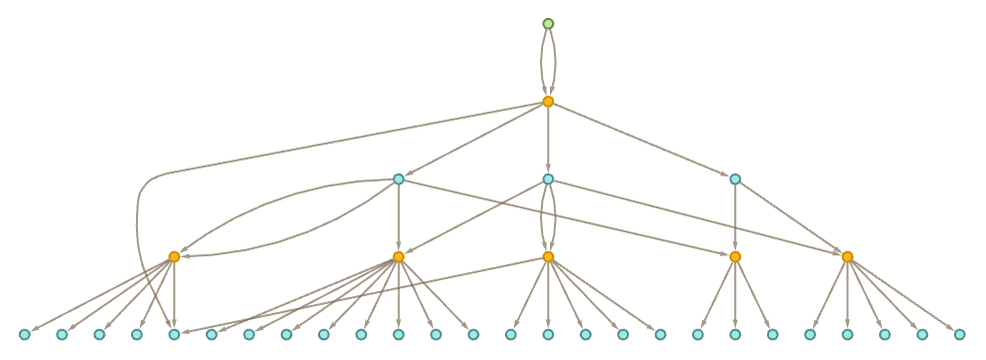

where for now there are no patterns being used. Starting from this rule, we get the token-event graph (where now we’re indicating the initial “axiom” statement using a slightly different color):

One subtlety here is that the xy is applied to itself—so there are two edges going into the event from the node representing the rule. Another subtlety is that there are two different ways the rule can be applied, with the result that there are two output rules generated.

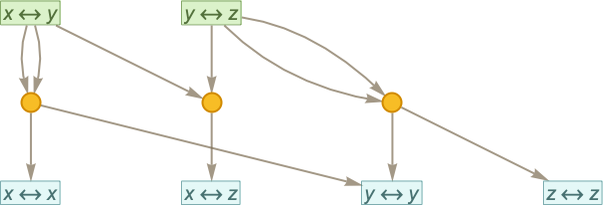

Here’s another example, based on the two rules:

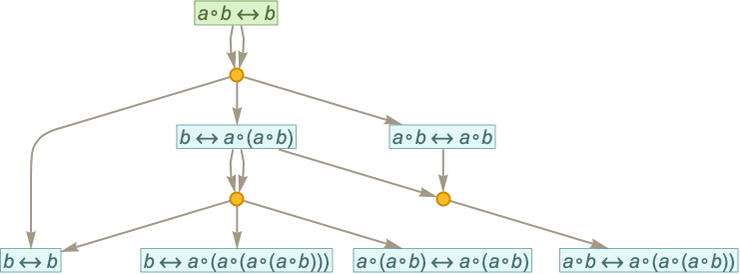

Continuing for another step we get:

Typically we will want to consider as “defining an equivalence”, so that vu means the same as uv, and can be conflated with it—yielding in this case:

Now let’s consider the rule:

After one step we get:

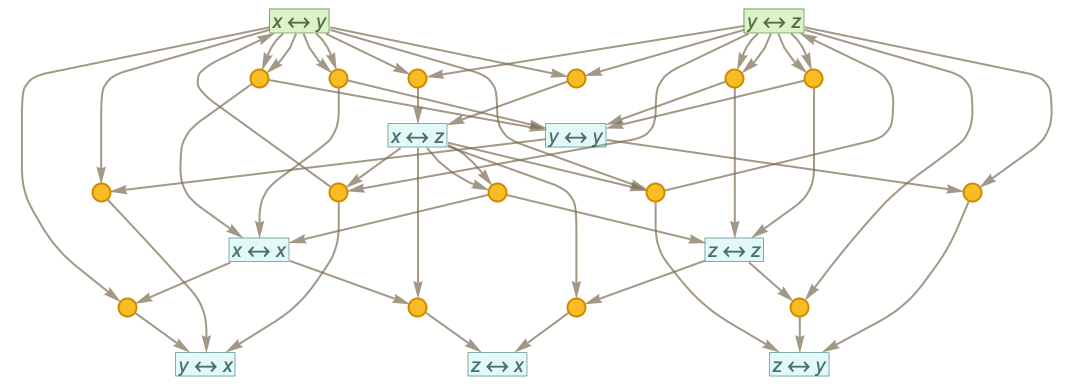

After 2 steps we get:

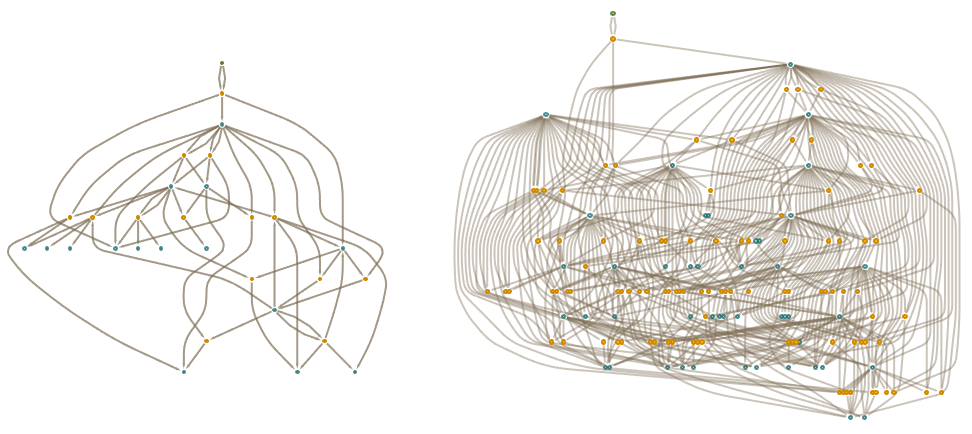

The token-event graphs after 3 and 4 steps in this case are (where now we’ve deduplicated events):

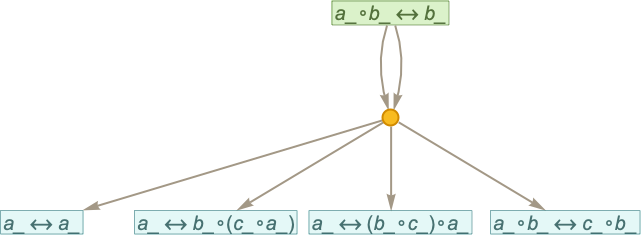

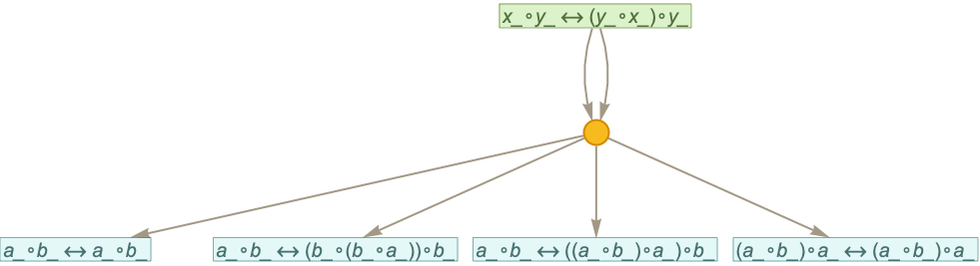

Let’s now consider a rule with the same structure, but with pattern variables instead of literal symbols:

Here’s what happens after one step (note that there’s canonicalization going on, so a_’s in different rules aren’t “the same”)

and we see that there are different theorems from the ones we got without patterns. After 2 steps with the pattern rule we get

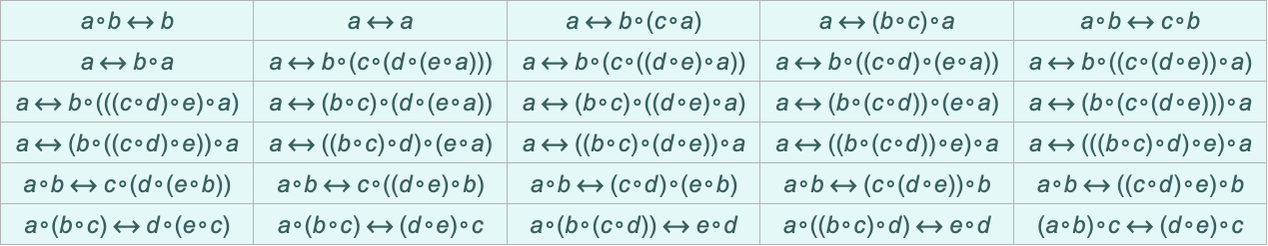

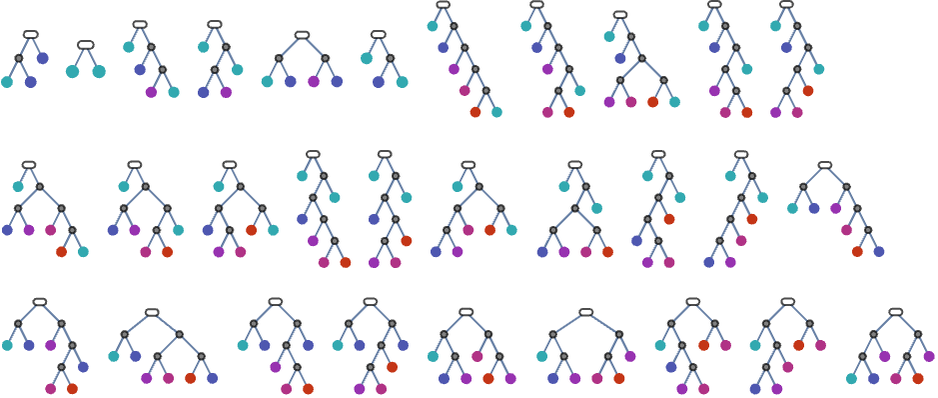

where now the complete set of “theorems that have been derived” is (dropping the _’s for readability)

or as trees:

After another step one gets

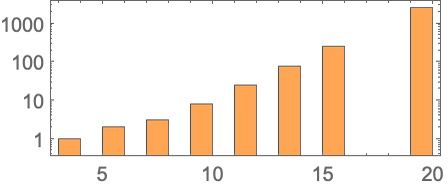

where now there are 2860 “theorems”, roughly exponentially distributed across sizes according to

and with a typical “size-19” theorem being:

In effect we can think of our original rule (or “axiom”) as having initiated some kind of “mathematical Big Bang” from which an increasing number of theorems are generated. Early on we described having a “gas” of mathematical theorems that—a little like molecules—can interact and create new theorems. So now we can view our accumulative evolution process as a concrete example of this.

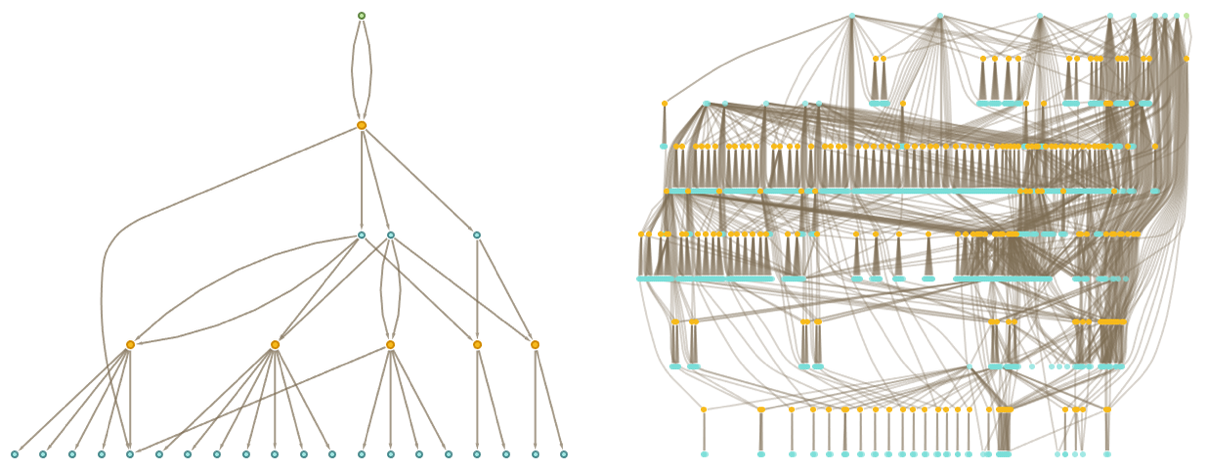

Let’s consider the rule from previous sections:

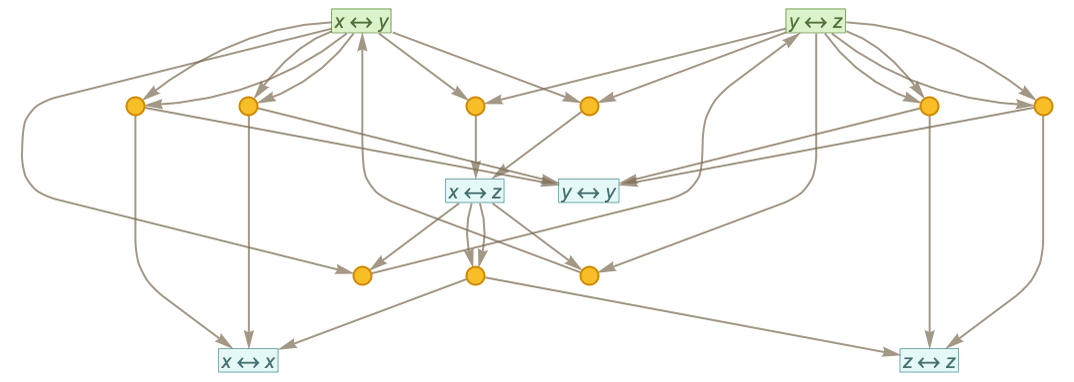

After one step of accumulative evolution according to this rule we get:

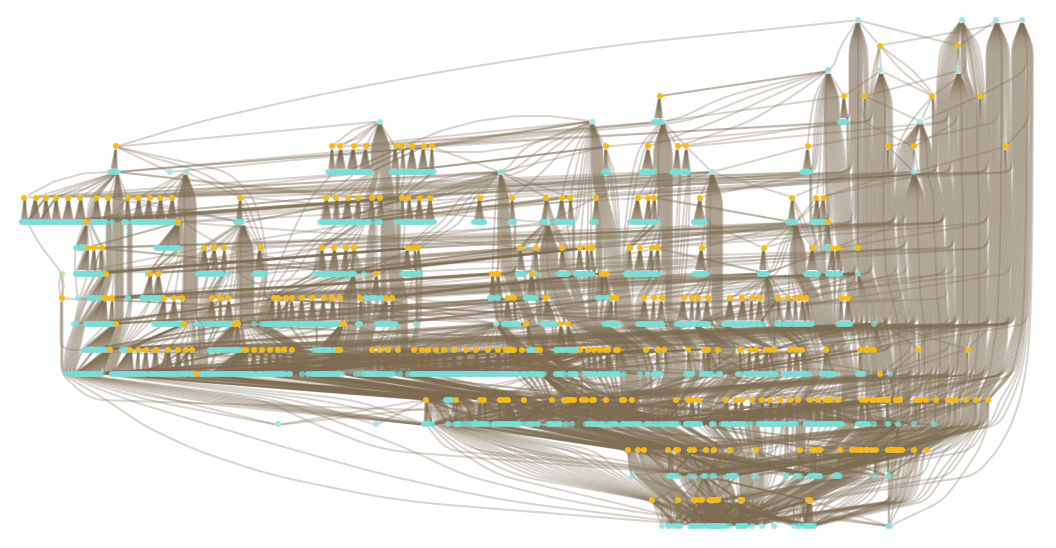

After 2 and 3 steps the results are:

What is the significance of all this complexity? At a basic level, it’s just an example of the ubiquitous phenomenon in the computational universe (captured in the Principle of Computational Equivalence) that even systems with very simple rules can generate behavior as complex as anything. But the question is whether—on top of all this complexity—there are simple “coarse-grained” features that we can identify as “higher-level mathematics”; features that we can think of as capturing the “bulk” behavior of the accumulative evolution of axiomatic mathematics.